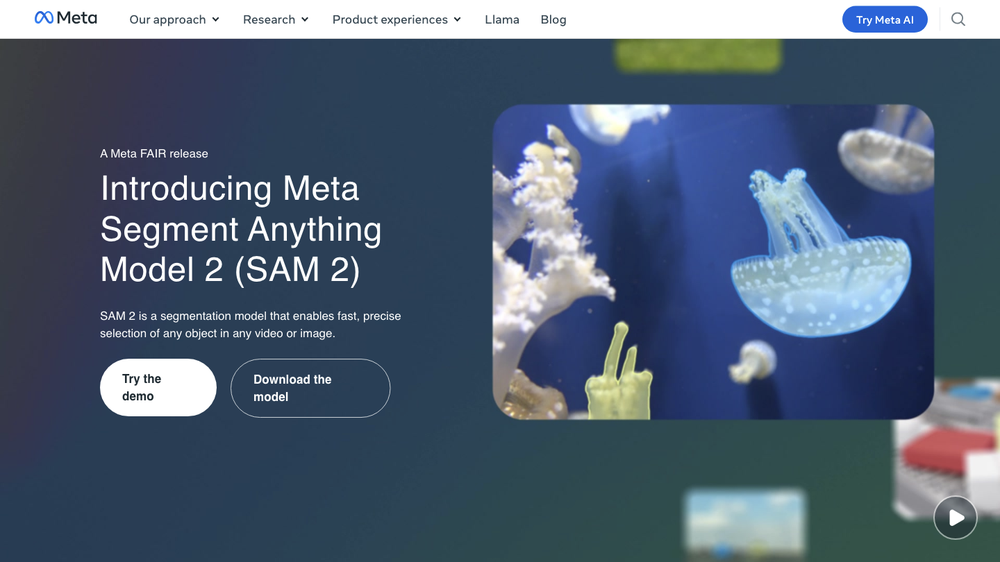

What is Meta Segment Anything Model 2 (SAM 2)?

Meta Segment Anything Model 2 (SAM 2) is a segmentation model that enables fast, precise selection of any object in any video or image.

Key Features of SAM 2

Segment any object, now in any video or image

SAM 2 is the first unified model for segmenting objects across images and videos. You can use a click, box, or mask as the input to select an object on any image or frame of video.
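
To make the three input types concrete, here is a minimal sketch of how a click, box, or mask prompt on one frame could be represented. The `Prompt` class, its field names, and the "exactly one prompt type" rule are assumptions made for illustration; they are not the SAM 2 API.

```python
from dataclasses import dataclass
from typing import List, Optional, Tuple


@dataclass
class Prompt:
    """One user hint on a single image or video frame (hypothetical structure)."""
    frame_index: int
    # A click as (x, y) in pixel coordinates.
    click: Optional[Tuple[int, int]] = None
    # A box as (x_min, y_min, x_max, y_max) in pixel coordinates.
    box: Optional[Tuple[int, int, int, int]] = None
    # A mask as rows of 0/1 values covering the frame.
    mask: Optional[List[List[int]]] = None

    def kind(self) -> str:
        """Return which prompt type is set; this sketch expects exactly one."""
        provided = [name for name in ("click", "box", "mask")
                    if getattr(self, name) is not None]
        if len(provided) != 1:
            raise ValueError(f"expected exactly one prompt type, got {provided}")
        return provided[0]


# The same object selected three different ways on frame 0.
click_prompt = Prompt(frame_index=0, click=(120, 80))
box_prompt = Prompt(frame_index=0, box=(100, 60, 180, 140))
mask_prompt = Prompt(frame_index=0, mask=[[0, 1], [1, 1]])
```

Keeping the frame index on the prompt reflects the idea that a selection can be made on any frame of a video, not only the first.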

Robust segmentation, even in unfamiliar videos

SAM 2 is capable of strong zero-shot performance for objects, images and videos not previously seen during model training, enabling use in a wide range of real-world applications.

Real-time interactivity and results

SAM 2 is designed for efficient video processing with streaming inference to enable real-time, interactive applications.

State-of-the-art performance for object segmentation

SAM 2 outperforms the best models in the field for object segmentation in videos and images.

How to Use SAM 2

Track an object across any video interactively

Try the demo and track an object across any video interactively with as little as a single click on one frame, and create fun effects.

The Next Generation of Meta Segment Anything

SAM 2 brings state-of-the-art video and image segmentation capabilities into a single model, while preserving a simple design and fast inference speed.

Model Architecture

The SAM 2 model extends the promptable capability of SAM to the video domain by adding a per-session memory module that captures information about the target object in the video.
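
As a rough illustration of what "per-session memory" could mean, the sketch below keeps a small, bounded record of the target object for each session. The text above does not describe the module's internals, so the `SessionMemory` class, the centroid summary, and the three-frame limit are all assumptions chosen to keep the example small.

```python
from collections import deque
from typing import Deque, Dict, List, Optional, Tuple

Centroid = Tuple[float, float]


class SessionMemory:
    """Toy per-session store of where the target object was on recent frames."""

    def __init__(self, max_frames: int = 3) -> None:
        # A bounded deque drops the oldest entry once max_frames is reached.
        self._recent: Deque[Tuple[int, Centroid]] = deque(maxlen=max_frames)

    def remember(self, frame_index: int, centroid: Centroid) -> None:
        self._recent.append((frame_index, centroid))

    def last_known_position(self) -> Optional[Centroid]:
        return self._recent[-1][1] if self._recent else None

    def frames(self) -> List[int]:
        return [index for index, _ in self._recent]


# One memory per session, so different videos or users never share state.
sessions: Dict[str, SessionMemory] = {}


def memory_for(session_id: str) -> SessionMemory:
    if session_id not in sessions:
        sessions[session_id] = SessionMemory()
    return sessions[session_id]


memory = memory_for("session-a")
for frame_index in range(5):
    memory.remember(frame_index, (10.0 + frame_index, 20.0))
```

After five frames, only the three most recent entries remain in `memory`, while a second session such as `memory_for("session-b")` starts empty.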

The Segment Anything Video Dataset

SAM 2 was trained on a large and diverse video segmentation dataset: a collection of videos and masklets (object masks over time) created by applying SAM 2 interactively in a model-in-the-loop data engine.
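
A masklet, described above as an object mask over time, can be pictured as a mapping from frame index to a mask for one object. The `Masklet` class below is a hypothetical in-memory representation for illustration only; it is not the storage format of the released dataset.

```python
from dataclasses import dataclass, field
from typing import Dict, List

Mask = List[List[int]]  # rows of 0/1 values


@dataclass
class Masklet:
    """Masks for a single object across the frames of one video."""
    object_id: int
    masks: Dict[int, Mask] = field(default_factory=dict)

    def add(self, frame_index: int, mask: Mask) -> None:
        self.masks[frame_index] = mask

    def area(self, frame_index: int) -> int:
        """Number of pixels the object covers on the given frame."""
        return sum(sum(row) for row in self.masks[frame_index])

    def visible_frames(self) -> List[int]:
        """Frames on which the object covers at least one pixel."""
        return sorted(index for index, mask in self.masks.items()
                      if any(any(row) for row in mask))


masklet = Masklet(object_id=1)
masklet.add(0, [[1, 1], [0, 0]])
masklet.add(1, [[0, 1], [0, 0]])
masklet.add(2, [[0, 0], [0, 0]])  # object fully hidden on this frame
```

An empty mask on frame 2 models an object that is temporarily out of view, so `visible_frames()` reports only frames 0 and 1.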

Open Innovation

To enable the research community to build upon this work, we’re publicly releasing a pretrained Segment Anything 2 model, along with the SA-V dataset, a demo, and code.

Potential Model Applications

SAM 2 can be used by itself, or as part of a larger system with other models in future work to enable novel experiences.

Helpful Tips

- In the future, SAM 2 could be extended to take other types of input prompts, enabling creative ways of interacting with objects in real-time or live video.

- The video object segmentation outputs from SAM 2 could be used as input to other AI systems such as modern video generation models to enable precise editing capabilities.
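
As a small illustration of the second tip, the sketch below turns a segmentation mask into inputs a downstream editing step might want: a bounding box and a frame with everything but the object blanked out. The helper names `mask_to_box` and `keep_object_only` are hypothetical, and masks are plain nested lists to keep the example dependency-free.

```python
from typing import List, Optional, Tuple

Frame = List[List[int]]  # grayscale pixel rows
Mask = List[List[int]]   # rows of 0/1 values, same shape as the frame


def mask_to_box(mask: Mask) -> Optional[Tuple[int, int, int, int]]:
    """Return (x_min, y_min, x_max, y_max) of the masked pixels, or None if empty."""
    ys = [y for y, row in enumerate(mask) if any(row)]
    xs = [x for row in mask for x, value in enumerate(row) if value]
    if not ys:
        return None
    return (min(xs), min(ys), max(xs), max(ys))


def keep_object_only(frame: Frame, mask: Mask, fill: int = 0) -> Frame:
    """Replace every pixel outside the mask with `fill`."""
    return [[px if keep else fill for px, keep in zip(frame_row, mask_row)]
            for frame_row, mask_row in zip(frame, mask)]


frame = [[9, 9, 9],
         [9, 5, 6],
         [9, 7, 9]]
mask = [[0, 0, 0],
        [0, 1, 1],
        [0, 1, 0]]

box = mask_to_box(mask)
isolated = keep_object_only(frame, mask)
```

Applied per frame to a tracked object, the same two helpers would give a downstream system a region to edit and the pixels that belong to it.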

Frequently Asked Questions

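- How does SAM 2 work? SAM 2 is a promptable segmentation model: you select an object with a click, box, or mask on any image or video frame, and a per-session memory module captures information about that object so it can be segmented across the video.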

- What are the key features of SAM 2? SAM 2 has several key features, including segmenting any object in any video or image, robust segmentation, real-time interactivity and results, and state-of-the-art performance for object segmentation.

- How can I use SAM 2? You can try the demo and track an object across any video interactively with as little as a single click on one frame, and create fun effects.